Key Points

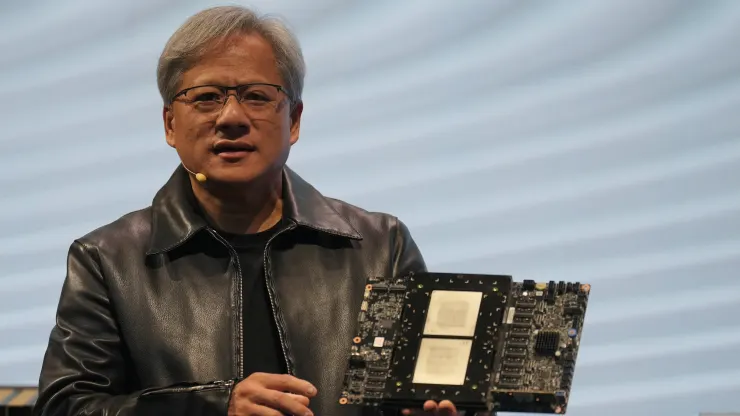

- Nvidia on Monday unveiled the H200, a graphics processing unit designed for training and deploying the kinds of artificial intelligence models powering the generative AI boom.

- The H200 includes 141GB of next-generation "HBM3e" memory that will help it generate text, images or predictions using AI models.

- Interest in Nvidia’s AI GPUs has supercharged the company, with sales expected to surge 170% this quarter.

Nvidia on Monday unveiled the H200, a graphics processing unit designed for training and deploying the kinds of artificial intelligence models that are powering the generative AI boom.

The new GPU is an upgrade from the H100, the chip OpenAI used to train its most advanced large language model, GPT-4. Big companies, startups and government agencies are all vying for a limited supply of the chips.

H100 chips cost between $25,000 and $40,000, according to an estimate from Raymond James, and thousands of them working together are needed to create the biggest models in a process called “training.”

Excitement over Nvidia’s AI GPUs has supercharged the company’s stock, which is up more than 230% so far in 2023. Nvidia expects around $16 billion of revenue for its fiscal third quarter, up 170% from a year ago.

The key improvement with the H200 is that it includes 141GB of next-generation "HBM3e" memory that will help the chip perform "inference," or using a large model after it's trained to generate text, images or predictions.

Nvidia said the H200 will generate output nearly twice as fast as the H100. That's based on a test using Meta's Llama 2 LLM.

The H200, which is expected to ship in the second quarter of 2024, will compete with AMD's MI300X GPU. AMD's chip, like the H200, has more memory than its predecessors, which helps fit big models on the hardware to run inference.

Nvidia said the H200 will be compatible with the H100, meaning that AI companies already training with the prior model won't need to change their server systems or software to use the new version.

Nvidia says it will be available in four-GPU or eight-GPU server configurations on the company’s HGX complete systems, as well as in a chip called GH200, which pairs the H200 GPU with an Arm-based processor.

However, the H200 may not hold the crown of the fastest Nvidia AI chip for long.

While companies like Nvidia offer many different configurations of their chips, new semiconductors often take a big step forward about every two years, when manufacturers move to a different architecture that unlocks more significant performance gains than adding memory or other smaller optimizations. Both the H100 and H200 are based on Nvidia’s Hopper architecture.

In October, Nvidia told investors that it would move from a two-year architecture cadence to a one-year release pattern due to high demand for its GPUs. The company displayed a slide suggesting it will announce and release its B100 chip, based on the forthcoming Blackwell architecture, in 2024.

Credit: CNBC